Tags: Projects

Duke Nukem Forever

June 4, 2011 at 7:42 pm (CST)

131 comments

The Duke Nukem Forever demo is out. I can't even convey how surreal it is to me to be typing those words. But anyway, I've been poking around the demo for the last couple of days, and a new version of Noesis is up now that supports extracting 5 different variants of its included .dat packages.

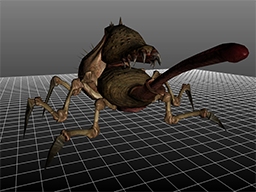

The picture is of the world's first (well, probably) right and proper DNF content mod, with Duke sporting his brand new Takei Pride tanktop. Noesis spits out all of the info you need to re-inject textures while it's extracting them, and I've already got a fully functional repacker that I intend to release sometime relatively soon. I've also finished hammering out the model format now, and Noesis v3.27 and later can view/export them.

The process of exporting the models is pretty simple, but requires a few steps. Here's a step by step of what you need to do.

1) Using Noesis, extract the contents of SkinMeshes/defs.dat, SkinMeshes/meshes.dat, and SkinMeshes/skels.dat. By default, Noesis will want to extract these to separate folders. You don't want this; instead, extract them all to a single folder. (e.g. "c:\steam\steamapps\common\duke nukem forever demo\SkinMeshes\allfiles")

2) Browse to the .msh of your choice in Noesis and open it.

3) If .skl or .def files cannot be found automatically you will be prompted for them. Additionally, Noesis will attempt to find MegaPackage.dat and TextureDirectory.dat relative to the path of the .msh file. If it cannot, you will be prompted for those files as well.

4) Export and enjoy. Note that materials are still a work in progress, and it can't automatically find the right materials in most cases. But certain models are working. (like Duke and one of the Holsom twins)

I've got model re-import/modification working, but the material situation limits it pretty severely. In the coming days/weeks, I'll be continuing to reverse that data and trying to figure out a reasonable process for replacing/overriding assets that doesn't involve rebuilding all of the game's packages.

Also, if you haven't heard yet, you can play the vehicle sequence in first-person. It's much nicer this way.

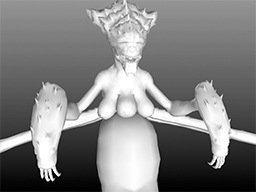

Update: I've successfully exported a model to COLLADA, modified it (re-weighted, added and modified geometry, modified the texture), re-exported it to .msh using my in-progress Noesis exporter, and got it to load in the demo. Have a look:

Wait for the scene with the twins.

Comments

131 comments in total.

IP: 82.51.65.195

Grazia

August 26, 2011 at 3:09 am (CST)

Good morning,

Firstly, I would like to say I'm sorry for writing a non relevant message to your blog . . .

I am writing from Italy, I found your contact info on a R4 mini sd that was given to my son ( 7 yrs old) . . . I am desperately trying to find an update for the software as the card does not work any more.

I understand that all I have to do is substitute the new "moonshell" folder for the old one . . . but . . . where can I find the new one? I have been downloading stuff but nothing seems to be what I'm looking for . . . .

Also I have a MacBook and what I find seems to work only on windows - which means I need to use my old Vaio . . . and it takes forever to do anything . . . can you help me? can you send me a link or something?

thank you soooooo much - you will make a 7 yr old very happy ( and a mother very happy)

my contact is grace_a@libero.it

thank you again for what you can do

Grazia

IP: 87.194.118.1

(GIT)r-man

August 19, 2011 at 12:10 am (CST)

LOL edit :D

IP: 70.156.191.125

SnakePlisskenPMW

August 15, 2011 at 11:15 am (CST)

I'm a crazy liar and I eat babies

IP: 87.194.118.1

(GIT)r-man

August 7, 2011 at 5:25 pm (CST)

I was sort of hoping he'd take the bait and rant about how I spelt grammar wrong :P

Oh well, back to Borderlands mapping...thanks for your work on the DNF assets, appreciated by me anyway. :)

IP: 68.0.165.94

Rich Whitehouse

August 7, 2011 at 3:44 pm (CST)

It's hard to tell if he's 12, an insane non-English-speaker, a mildly damaged troll, or some combination thereof. He definitely has no idea what he's talking about here, though, and will not be producing a usable dukeed.exe for DNF. Unless he becomes a knowledgeable, mature adult, then comes back and does it in another 10 years or so.

The only thing he seems to actually be capable of is spamming the hell out of me, and presumably trying to get attention in some perceived spotlight. (little does he know, there are only 5 people in the world that might actually ever bother reading any of this)

There I go making my frebulouse comments again.

IP: 87.194.118.1

(GIT)r-man

August 7, 2011 at 10:09 am (CST)

Why all the hate?

...and poor grammer/spelling :D

IP: 74.163.169.155

SnakePlisskenPMW

August 7, 2011 at 9:22 am (CST)

further more. i have been able to edit object class for such things like the basket ball. makeing it bounce 10 times harder. i have been able to exchange textures and UV texture wrapping for the models. i have been able to edit the models and replace then with other models for exsample a big boss model one might have, they would be able to aply this. further more i do all my work on the PS3 consel. and never layed hands on the PC file structure of the game. but i know threw discusstion with others that file formats are virtualy the same, just held in exsectuive jars of the PS3 platform.

further more...

haveing compiled DNF script into unrealED. takeing into content editor.u, dngame.u, engine.u, ect. and other object base unreal scripting- one can now edit events in game by changeing the way objects interact with each other...

mark my words. after im done with my project on the PS3. i will come back to all this and see how far every one has came....

if nothing has been done... i will gcc cmake up from scracth my very own dukeED.exe that boots up a UDK with pre compiled DNF prequisits. ( for two resons. 1: so those can finaly have something they can call a "dukED" and 2 so i can show how easy it is to make such a exscutable and present it in a video wicth is how every one was convinced there was a dukED.exe in the first place)

i dont know what else to say other then my email is PMWHOMEVENTS@AOL.COM email me what ever u wish....

rich.. the reson why i emailed you in the first place is becuse i see that you have the skill to get the job done. i admired your work. and i thought that i could seek your aid and work together on things... but instead you chose to make frebulouse comments about myself... none the less i could care less bro... it all just a dang shame....

IP: 74.163.169.155

SnakePlisskenPMW

August 7, 2011 at 9:13 am (CST)

let me say some things to you SIR. if i was not so dame busy porting my own highly remastered version of GZDOOM to the PS3 CFW interface; i would make and release a video of MY development team and I, makeing and applying levels made with ( a specific ) UDK release.

there may not be a "interface" as you put it for a DNF editor. this "dukeED" as you put it. this is becuse there is no duke. is simply unrealED. i feel pitty for so meny people who take intreast in DNF modding, as i asumed if i figured it out surly others have, but it seems others have not. i would of thought by now that a smart guy like you Rich, would of figured it out by now.... the bottem line is. compiling the full set of editor unreal script packed in every single game into unrealED.

IP: 69.105.224.178

interested

August 6, 2011 at 8:24 pm (CST)

shame you won't be able to complete this. (sad-panda)

hopefully someone will take up the torch with your info...

thanks for sharing all you have thusfar!

IP: 68.0.165.94

Rich Whitehouse

August 5, 2011 at 6:56 pm (CST)

Well, I have to finish a project with CalTech, and immediately after that, there's a good chance I'll be moving to San Diego to begin working at Sony. I didn't expect myself to get this busy, or for random opportunities to keep throwing themselves in my face, so I don't see myself having time to do modding tools the Right Way at this point. I'm just gonna release what I know about the archive containers, and anyone that has the time/motivation can make the actual tools. Writing file injectors/rebuilders will be trivial for most formats, but that's not something I want to be responsible for myself, mostly due to the aforementioned "it mangles your core game files" reason. Someone else can have fun dealing with all of that fallout.

First thing to note is that most DNF archive formats store Unreal-encoded (variable-sized) values. They can be decoded with something like this:

http://pastebin.com/5Hue4Kft

Additionally, strings are often stored with an Unreal-encoded value at the start to dictate the byte count, and can be read with something like:

int nameLen = DNF_ReadEncodedMem(f);

f->Read(dst, nameLen);

dst[nameLen] = 0;

I'll refer to those as "Unreal strings". From here, the format for each archive tends to be a bit different.
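For readers without access to the pastebin link, here is a minimal sketch of what such a decoder might look like. It assumes the encoding matches the classic Unreal Engine "compact index" layout, which has not been verified against the DNF files here. `ByteReader`, `readEncoded`, and `readUnrealString` are hypothetical names for illustration, not anything from Noesis or the game.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <stdexcept>
#include <string>

// Hypothetical in-memory cursor over a loaded archive.
struct ByteReader {
    const uint8_t *data;
    size_t size;
    size_t pos;

    uint8_t readByte() {
        if (pos >= size) throw std::out_of_range("read past end of buffer");
        return data[pos++];
    }
};

// ASSUMPTION: classic Unreal compact index layout.
// First byte:  bit 7 = sign, bit 6 = more bytes follow, bits 0-5 = value.
// Later bytes: bit 7 = more bytes follow, bits 0-6 = value. At most 5 bytes.
int32_t readEncoded(ByteReader &r) {
    uint8_t b = r.readByte();
    const bool negative = (b & 0x80) != 0;
    uint32_t value = b & 0x3F;
    if (b & 0x40) {
        int shift = 6;
        for (int i = 0; i < 4; i++) {
            b = r.readByte();
            value |= static_cast<uint32_t>(b & 0x7F) << shift;
            shift += 7;
            if (!(b & 0x80)) break;
        }
    }
    const int64_t wide = negative ? -static_cast<int64_t>(value)
                                  : static_cast<int64_t>(value);
    return static_cast<int32_t>(wide);
}

// Reads an "Unreal string": an encoded byte count followed by that many bytes.
// Negative lengths are rejected here; how DNF uses them (if at all) is unknown.
std::string readUnrealString(ByteReader &r) {
    const int32_t len = readEncoded(r);
    if (len < 0 || static_cast<size_t>(len) > r.size - r.pos)
        throw std::runtime_error("bad string length");
    std::string s(reinterpret_cast<const char *>(r.data + r.pos),
                  static_cast<size_t>(len));
    r.pos += static_cast<size_t>(len);
    assert(r.pos <= r.size);
    return s;
}
```

If the stored byte count includes a trailing NUL, the returned string will carry it too, so trim it before using the name as a path.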

Static meshes .dat

--

Do a ReadEncodedMem at the start of the file to get the number of files. For each file, read an Unreal string, then a DWORD. The DWORD is the offset to the data in absolute file space. Size of each entry can be determined locally by checking the next offset, or the total file size in the case of the last file.

An easy way to handle injecting is to stomp directly over the data at the offset for the file being replaced. If the data you're injecting is larger, you'll have to append it to the end of the file and change the offset instead.
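As a rough sketch of the size and injection logic just described: the code below assumes the directory has already been parsed into name/offset pairs and that entries appear in ascending offset order. `MeshDirEntry`, `entrySizes`, and `injectionOffset` are made-up names for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical parsed form of one directory entry.
struct MeshDirEntry {
    std::string name;
    uint32_t ofs;  // absolute offset of the entry's data in the .dat
};

// Size of entry i is the next entry's offset minus its own offset;
// the last entry runs to the end of the file.
// ASSUMPTION: entries are stored in ascending offset order.
std::vector<uint32_t> entrySizes(const std::vector<MeshDirEntry> &entries,
                                 uint32_t fileSize) {
    std::vector<uint32_t> sizes(entries.size());
    for (size_t i = 0; i < entries.size(); i++) {
        const uint32_t end =
            (i + 1 < entries.size()) ? entries[i + 1].ofs : fileSize;
        assert(end >= entries[i].ofs);
        sizes[i] = end - entries[i].ofs;
    }
    return sizes;
}

// Where to write replacement data: in place if it fits, otherwise at the
// end of the file (the caller must then patch the entry's offset).
uint32_t injectionOffset(const MeshDirEntry &e, uint32_t oldSize,
                         uint32_t newSize, uint32_t fileSize) {
    return (newSize <= oldSize) ? e.ofs : fileSize;
}
```

One caveat with this approach: because sizes are derived from neighbouring offsets, a smaller replacement written in place will still appear to have the old size, with stale bytes after it. Whether the game tolerates that has not been tested here.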

Sounds .dat

--

The header looks like:

typedef struct sndDirHdr_s

{

BYTE id[4]; //"KCPS"

int ver;

} sndDirHdr_t;

As with static meshes, number of files is an Unreal-encoded value at the start. Then, for each file, read 5 encoded values (path indices), 2 DWORDS (unknown), 1 encoded value, 1 int (offset to file data), and 13 more encoded values. After you've done this, you'll be at the paths section of the file.

In the paths section, read an encoded value to get the number of names. Now for each name, read an Unreal string. The path for each sound can be rebuilt by using the path indices (from the first batch of file info that you read) to index into this array. In this particular archive, I've found the first 2 entries tend to be...0, I think it was (going from memory here), so something like this tends to work out for building the full path name:

char fn[MAX_PATH];

fn[0] = 0;

for (int j = 2; j < 5; j++)

{

char *givenName = names[snd.path[j]];

strcat(fn, "/");

strcat(fn, givenName);

}

This gives you the offset and name for each sound in the archive. Injecting methods would be similar to the static meshes archive.

Texture directory .dat

--

This one is a bit complicated. The header looks like:

typedef struct texDirHdr_s

{

char id[4]; //"RIDT" or "RIDB"

int unkA;

int unkB;

int unkC;

BYTE numFiles;

} texDirHdr_t;

numFiles may be Unreal-encoded, can't recall if I tested results with that. After the header, the directory starts with a list of files that contain the actual texture data. For each file (up to numFiles in the header), read an Unreal string and a single int. This will give you file names to look for and open.

After reading the data file names, read an encoded value to get the number of textures. Now for each texture, read the following: int (number of names), encoded value * number of names (path indices), int (index for the appropriate data file), int (offset to texture data). If the texture directory's header is RIDB, also read an additional 9 bytes. Of those 9 bytes, the 4th and 7th bytes indicate the presence of sub-textures. So the 4th and 7th bytes being non-0 means the entry actually contains 2 separate textures. Once you've read all of that, you can organize it into a data structure along the lines of:

typedef struct texObj_s

{

char *name;

int nameNum;

DWORD infoOfs;

DWORD fileNum;

DWORD texOfs;

int idxList[MAX_TEX_FOLDERS];

int numSubFiles;

} texObj_t;

At this point in the file, it's time to read the names list. This is the same as the sound .dat. Read an encoded value to get the number of names, then an Unreal string for each name. Now you have an array of names to index into with your texture path indices.

Now, for each texture, loop through again referencing your texObj_t data. For each subFile (there is only 1 subFile if the header was not RIDB), build your full path name using the path indices (RIDB paths contain 4 entries for each subfile, otherwise use the nameNum field). Next seek to texOfs in the appropriate fileNum. For RIDB, read 2 ints (width and height of texture). The following data will be variable-sized, and 46 bytes in (if bit 1 is set on byte 44, skip another 2 bytes to 48 bytes in) will be the mip count, followed by mip offsets, for each sub-texture. In the case of non-RIDB, instead, 14 bytes in is the mip count, and then you have 3 ints (data offset, tex width, tex height) for each mip. In either case, you can now seek to the appropriate mipmap offset.

After seeking to the mip offset, read an Unreal-encoded value. This is the size of the mipmap data. Now you can read the actual texture data. In the case of RIDB, the first subFile should always be DXT5, and subsequent file(s) DXT1. For non-RIDB, read the 4th byte from texOfs. If it is 3, DXT1, and if it's 8, DXT5. You may also encounter special formats (like cubemaps) that require custom handling, but will likely still be DXT1 data.
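The format rules above can be sketched as a small helper, together with the standard DXT block-size math, which may be handy for sanity-checking the mip size read from the file. The format code is passed in as a parameter because the exact byte position is only described loosely above. All names here are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

enum class TexFormat { DXT1, DXT5, Unknown };

// Non-RIDB: map the format code byte (3 = DXT1, 8 = DXT5, per the text).
// Anything else is reported as Unknown for custom handling.
TexFormat formatFromCode(uint8_t code) {
    switch (code) {
        case 3: return TexFormat::DXT1;
        case 8: return TexFormat::DXT5;
        default: return TexFormat::Unknown;
    }
}

// RIDB: the first sub-file is DXT5 and any later ones are DXT1.
TexFormat formatForRidbSubFile(int subFileIndex) {
    return subFileIndex == 0 ? TexFormat::DXT5 : TexFormat::DXT1;
}

// Expected byte size of one mip level. DXT data is stored as 4x4 pixel
// blocks: 8 bytes per block for DXT1, 16 bytes per block for DXT5.
uint32_t dxtMipBytes(TexFormat fmt, uint32_t width, uint32_t height) {
    assert(fmt != TexFormat::Unknown);
    const uint32_t blocksX = std::max<uint32_t>(1, (width + 3) / 4);
    const uint32_t blocksY = std::max<uint32_t>(1, (height + 3) / 4);
    const uint32_t blockBytes = (fmt == TexFormat::DXT1) ? 8 : 16;
    return blocksX * blocksY * blockBytes;
}
```

If the encoded mip size does not match `dxtMipBytes` for the expected format and dimensions, that may be a hint that the entry is one of the special formats mentioned above.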

And that's that. To inject data, the cleanest thing is to rebuild the texture directory with an additional file of your own making. You can write your mipsize+data binary into your own texture directory, and point texture directory entries to that file/offset instead.

Animations .dat

--

These start off with an Unreal-encoded value to dictate the number of files. Then for each file, read this structure:

typedef struct datBEntry_s

{

char name[128];

DWORD unkA;

DWORD ofs;

DWORD size;

int unkB;

} datBEntry_t;

And that's that. Very simple format. ofs is the offset to the data in the same file, size is the size of that file.

Skinned mesh, skeleton, and def .dats

--

These are identical to animation .dat files, but use this structure instead:

typedef struct datAEntry_s

{

char name[128];

DWORD ofs;

DWORD size;

} datAEntry_t;
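Both fixed-size layouts (datAEntry_t here and datBEntry_t for animations) can be walked with the same loop. The sketch below assumes the entry count has already been decoded, that the structs are packed without padding (136 and 144 bytes respectively), that values are little-endian, and that names are NUL-padded. `DatFile`, `readU32LE`, and `parseEntryTable` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <string>
#include <vector>

// Hypothetical parsed result for one archive entry.
struct DatFile {
    std::string name;
    uint32_t ofs;   // offset of the data within the same .dat
    uint32_t size;  // size of the data in bytes
};

// Reads a little-endian 32-bit value without relying on host alignment.
static uint32_t readU32LE(const uint8_t *p) {
    return static_cast<uint32_t>(p[0]) | (static_cast<uint32_t>(p[1]) << 8) |
           (static_cast<uint32_t>(p[2]) << 16) |
           (static_cast<uint32_t>(p[3]) << 24);
}

// animLayout = false: datAEntry_t (name[128], ofs, size), 136 bytes.
// animLayout = true:  datBEntry_t (name[128], unkA, ofs, size, unkB), 144 bytes.
std::vector<DatFile> parseEntryTable(const uint8_t *table, size_t tableBytes,
                                     size_t count, bool animLayout) {
    const size_t stride = animLayout ? 144 : 136;
    const size_t ofsField = animLayout ? 132 : 128;
    assert(count * stride <= tableBytes);

    std::vector<DatFile> out;
    out.reserve(count);
    for (size_t i = 0; i < count; i++) {
        const uint8_t *e = table + i * stride;
        // The name may fill all 128 bytes with no terminator, so bound the scan.
        const void *nul = std::memchr(e, 0, 128);
        const size_t len =
            nul ? static_cast<size_t>(static_cast<const uint8_t *>(nul) - e) : 128;
        DatFile f;
        f.name.assign(reinterpret_cast<const char *>(e), len);
        f.ofs = readU32LE(e + ofsField);
        f.size = readU32LE(e + ofsField + 4);
        out.push_back(f);
    }
    return out;
}
```

Reading fields byte by byte rather than casting the buffer to the struct avoids alignment and padding surprises across compilers.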

Mega Package .dat

--

This is the biggest pain in the ass of all the files, as you'll likely want to rebuild the entire archive to modify it, and there may be checksum measures in place in the exe that you have to work around. (I never got around to testing this) The header is:

typedef struct megaHdr_s

{

BYTE id[4]; //"AGEM"

int ver;

} megaHdr_t;

After the header, read an encoded value to get the numFiles. Now, for each file up to numFiles, read this:

typedef struct megaEntry_s

{

DWORD unkA;

DWORD ofs;

DWORD size;

DWORD unkD;

DWORD unkE;

} megaEntry_t;

Read an Unreal string after each megaEntry_t to get the filename of the entry. After this, all of the data is actually in a big list of compressed blocks. The compression is deflate (which means you'll want to use inflate to decompress the data). If you're unfamiliar with zlib and the inflate algorithm, refer to Google. Next read an Unreal-encoded value to get the number of blocks, and then for each block, read one of these:

typedef struct megaBlockInfo_s

{

DWORD ofs;

WORD size;

} megaBlockInfo_t;

Next, read an int (size of remaining compressed block data), and this point in the file will be your base offset for the block data. You'll probably want to do an ftell to store it into a local variable when parsing.

Now, you'll want to loop through for each megaEntry_t again. Each block in this format is 4096 bytes when uncompressed, and the ofs for each entry is stored in "uncompressed" address space. So in order to determine your starting block, you'll want to do something like:

DWORD blockIdx = e.ofs>>12;

DWORD blockOfs = (e.ofs & 4095);

blockIdx is an index into your megaBlockInfo_t array. Now for each entry, loop through decompressing blocks (don't forget to use the appropriate offset into the first decompressed block), and appending data to your decompression buffer until you've read megaEntry.size in decompressed bytes. Now you have the appropriate filename and decompressed data for each entry.
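To illustrate the block walk, here is a minimal sketch that leaves zlib out of the picture: it assumes every block has already been inflated into memory, in directory order, with each block holding 4096 bytes except possibly the last. `extractEntry` and `kBlockSize` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

constexpr uint32_t kBlockSize = 4096;

// Collects `size` bytes starting at uncompressed address `ofs`.
// ASSUMPTION: `blocks` holds already-inflated blocks in directory order.
std::vector<uint8_t> extractEntry(
    const std::vector<std::vector<uint8_t>> &blocks, uint32_t ofs,
    uint32_t size) {
    uint32_t blockIdx = ofs >> 12;               // which 4096-byte block
    uint32_t blockOfs = ofs & (kBlockSize - 1);  // offset inside that block

    std::vector<uint8_t> out;
    out.reserve(size);
    while (out.size() < size) {
        assert(blockIdx < blocks.size());
        const std::vector<uint8_t> &b = blocks[blockIdx];
        assert(blockOfs < b.size());
        const size_t take =
            std::min<size_t>(b.size() - blockOfs, size - out.size());
        out.insert(out.end(), b.begin() + blockOfs,
                   b.begin() + blockOfs + take);
        blockIdx++;
        blockOfs = 0;  // only the first block starts mid-way
    }
    return out;
}
```

A real extractor would more likely inflate blocks on demand rather than holding the whole archive in memory, but the index and offset arithmetic stays the same.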

Injecting files into this archive, as mentioned, would be a pain. The easiest approach would probably be just adding your own compressed blocks onto the end of the blocks list, and referencing those, instead of even attempting to replace block data. It's probably best to rebuild the archive entirely, if you want to modify it. Though also as mentioned, to use a rebuilt archive in the game, you may have to spend some time in something like IDA Pro, to determine what you need to do in order to make the game happy about loading it.

That covers all of the archive types I looked into for DNF. Good luck rebuilding.


Site design and contents (c) 2009 Rich Whitehouse. Except those contents which happen to be images or screenshots containing shit that is (c) someone/something else entirely. That shit isn't really mine. Fair use though! FAIR USE!

All works on this web site are the result of my own personal efforts, and are not in any way supported by any given company. You alone are responsible for any damages which you may incur as a result of this web site or files related to this web site.